The Amnesia Tax

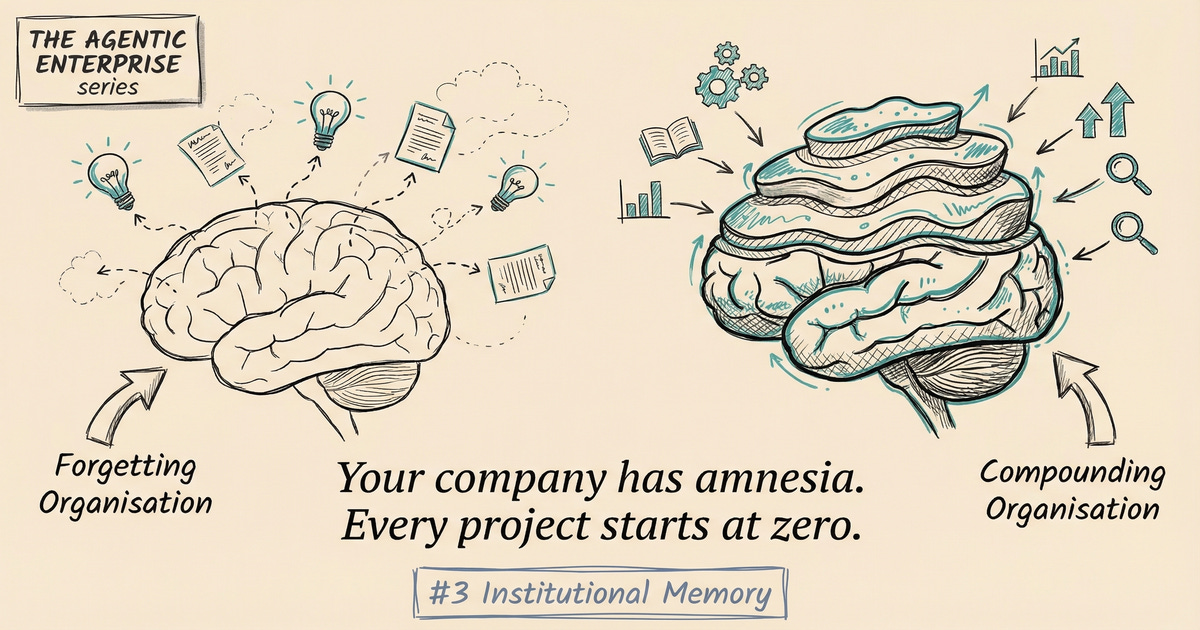

Why your organisation forgets everything it learns, and the compound interest you are leaving on the table

Deep Dive #3 in “The Agentic Enterprise” Series

Last week, I talked with a procurement manager about a recent episode in her team. Fourteen people around a conference table, a shared screen full of sticky notes. The topic: how to renegotiate their largest vendor contract. Forty minutes in, a woman from procurement, grey-haired and visibly tired of this, raised her hand. “We did this exact exercise eighteen months ago,” she said. “I wrote a twelve-page summary. Nobody’s read it.”

Nobody had. The document lived in a Confluence space alongside 4,300 other pages, buried under layers of outdated process guides and meeting notes that accumulated like geological sediment. The team spent two more hours reinventing conclusions they had already reached. The vendor, who had done this dance before, walked away with better terms than the last time.

Companies bleed knowledge this way. Not in dramatic ruptures, but in a slow, constant leak that most organisations have simply accepted as the cost of doing business. Decisions made last quarter are made again without reference to the reasoning that informed them. Experts retire and decades of pattern recognition walk out with them. We all know this happens. What’s strange is how we’ve chosen to treat it: as a culture problem. Launch a knowledge management initiative. Mandate wiki updates. Run retrospectives, record the outputs in documents nobody reads.

The diagnosis is wrong. It isn’t a culture problem. It’s an infrastructure problem. And infrastructure problems have infrastructure solutions.

Knowledge compounds like interest. A team that learns from experience makes better decisions, which produce better outcomes, which feed the next decision. Over time, the gap between a learning organisation and a forgetting one widens every quarter.

The inverse compounds too. Every forgotten lesson must be relearned at the original cost. Most organisations are trapped in this cycle. They create knowledge through projects and experiments, then immediately lose most of it. Whatever survives lives in individuals, not in the organisation. When those individuals are unavailable, the knowledge vanishes.

The ’90s knowledge management movement promised to fix this. Companies invested in portals, wikis, document repositories, expert directories. It mostly failed, and the reason is revealing: the implicit model was wrong. Knowledge isn’t explicit, documentable, and static. It’s largely tacit, contextual, dynamic, woven into the judgment calls and heuristics and pattern recognition that experts develop over years of making decisions under pressure. Asking people to document this is like asking them to write down how they ride a bicycle.

Software engineering, it turns out, solved an adjacent version of this problem decades ago. The pattern is called event sourcing.

In a traditional database, you store the current state. The account balance is EUR 1,247. How did it get there? You don’t know. In an event-sourced system, you store every event that led to the current state. Account opened. EUR 500 credited. EUR 230 debited. The current balance is derived from the history, and you can replay it, project it differently, audit any point in time.
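To make the contrast concrete, here is a minimal Python sketch of the idea. The `AccountEvent` type and the `replay` function are names invented for this illustration, and the history contains only the three events named above, so it does not add up to the EUR 1,247 in the earlier sentence.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class AccountEvent:
    """One immutable fact about the account (hypothetical schema)."""
    kind: str                      # "opened", "credited", or "debited"
    amount: Decimal = Decimal("0")


def replay(events):
    """Derive the balance by folding over the history, oldest event first."""
    balance = Decimal("0")
    for event in events:
        if event.kind == "credited":
            balance += event.amount
        elif event.kind == "debited":
            balance -= event.amount
    return balance


history = [
    AccountEvent("opened"),
    AccountEvent("credited", Decimal("500")),
    AccountEvent("debited", Decimal("230")),
]

current_balance = replay(history)            # state after all three events
balance_after_credit = replay(history[:2])   # the same account at an earlier point
```

The balance is never stored. It is recomputed from the log, which is why any earlier state can be recovered by replaying a prefix of the history.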

Organisational memory needs the same architecture. Not a database of current facts (documents, wikis, knowledge bases) but an event log of decisions, outcomes, and observations. Every decision becomes an event, carrying its context, options considered, reasoning applied, choice made, and expected outcome. Every outcome closes the loop.
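One way such events might look in code is sketched below. The field names follow the list in the paragraph above; the class names, the `decision_id` link, and the sample entries are assumptions made for illustration, not a reference schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DecisionEvent:
    decision_id: str
    made_on: date
    context: str
    options: tuple           # the options that were considered
    reasoning: str
    choice: str
    expected_outcome: str


@dataclass(frozen=True)
class OutcomeEvent:
    decision_id: str         # points back at the decision it closes
    observed_on: date
    observed_outcome: str


def open_decisions(log):
    """Return decisions that have no recorded outcome yet."""
    closed = {e.decision_id for e in log if isinstance(e, OutcomeEvent)}
    return [
        e for e in log
        if isinstance(e, DecisionEvent) and e.decision_id not in closed
    ]


# Hypothetical log entries, invented for the example.
log = [
    DecisionEvent("D-1", date(2024, 1, 10), "vendor contract renewal",
                  ("renew as is", "renegotiate"), "pricing drifted upward",
                  "renegotiate", "lower unit price"),
    DecisionEvent("D-2", date(2024, 2, 5), "support tooling",
                  ("build", "buy"), "small team, tight timeline",
                  "buy", "live within one quarter"),
    OutcomeEvent("D-1", date(2024, 6, 30), "unit price unchanged"),
]

still_open = open_decisions(log)  # decisions whose feedback loop is not closed
```

A query like `open_decisions` is the part documents cannot offer: the log itself can say which decisions are still waiting for their outcome.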

The event log is structured data. Machine-readable, searchable, composable. “What has been the typical duration of vendor contract negotiations in the manufacturing sector?” isn’t a query you planned for. But if the events carry the right metadata, the query is answerable. The woman from procurement wouldn’t have needed to raise her hand. The system would have surfaced her twelve-page summary before anyone sat down.
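As a rough sketch of why metadata makes unplanned questions answerable, the snippet below filters a small in-memory log and takes the median duration. The field names (`category`, `sector`, `duration_days`) and all the sample values are assumptions invented for the example.

```python
from statistics import median

# Hypothetical events; in practice these would come from the event log.
events = [
    {"type": "negotiation_closed", "category": "vendor_contract",
     "sector": "manufacturing", "duration_days": 45},
    {"type": "negotiation_closed", "category": "vendor_contract",
     "sector": "manufacturing", "duration_days": 60},
    {"type": "negotiation_closed", "category": "vendor_contract",
     "sector": "manufacturing", "duration_days": 90},
    {"type": "negotiation_closed", "category": "vendor_contract",
     "sector": "logistics", "duration_days": 30},
]


def typical_duration(events, **filters):
    """Median duration of events matching every metadata filter, or None."""
    matches = [
        e["duration_days"] for e in events
        if all(e.get(key) == value for key, value in filters.items())
    ]
    return median(matches) if matches else None


answer = typical_duration(
    events, category="vendor_contract", sector="manufacturing"
)
```

Nothing in `typical_duration` knows about vendors or sectors in advance. The question is composed at query time from whatever metadata the events happen to carry.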

The practical shift: stop recording knowledge as documents and start recording it as structured events. Retrospectives should produce structured signals, not meeting minutes. Decisions of consequence should carry their context, reasoning, and expected outcome. Outcomes should close the feedback loop back to the decisions that produced them.

I’ve implemented pieces of this infrastructure in practice, and even partial implementations change the texture of how teams operate.

Event capture comes first. Significant organisational events become structured data: decisions with context and reasoning, outcomes with measurements, customer interactions with resolution notes, project milestones with actuals versus predictions. At a fintech company I visited last winter, every product decision carried a “decision record” with four fields: context, options, choice, expected outcome. Six months later, they could query their own decision history the way a developer queries a git log. “It felt like having a conversation with our past selves,” their head of product told me, still slightly amazed by it.

From there, you build a knowledge graph. Events connect through relationships: this decision was informed by that prior experience; this outcome was the consequence of that decision; this expert contributed to these decisions. The graph answers questions a flat database cannot. “What do we know about this type of problem from previous experience?” becomes a query, not a research project.
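A toy version of such a graph fits in a few lines of Python. The `KnowledgeGraph` class, the relation names, and the node identifiers below are all hypothetical; a real system would more likely sit on a graph database, but the shape of the question is the same.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Minimal directed graph with labelled edges (illustrative only)."""

    def __init__(self):
        self._edges = defaultdict(list)   # source -> [(relation, target)]

    def relate(self, source, relation, target):
        self._edges[source].append((relation, target))

    def neighbours(self, source, relation):
        return [t for r, t in self._edges.get(source, []) if r == relation]

    def lineage(self, start, relation="informed_by"):
        """Follow one relation transitively and collect everything reached."""
        seen, stack = [], [start]
        while stack:
            node = stack.pop()
            for target in self.neighbours(node, relation):
                if target not in seen:
                    seen.append(target)
                    stack.append(target)
        return seen


graph = KnowledgeGraph()
graph.relate("decision:renegotiation-now", "informed_by", "summary:renegotiation-prior")
graph.relate("summary:renegotiation-prior", "informed_by", "outcome:prior-contract-terms")
graph.relate("expert:procurement-lead", "contributed_to", "summary:renegotiation-prior")

# "What prior experience sits behind this decision?"
background = graph.lineage("decision:renegotiation-now")
```

The traversal is what a flat table struggles with: the answer includes the prior outcome even though the current decision never referenced it directly.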

Contextual retrieval is where the payoff gets visible. The system surfaces relevant knowledge at the point of decision, not in response to a deliberate search. When a team starts a vendor contract, the system surfaces what the organisation knows about similar contracts: outcomes, complications, what worked. Nobody has to remember to look. The work context itself triggers retrieval.
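The sketch below shows the trigger mechanism in its simplest form: the tags of the work being started are compared with the tags on past events, and anything similar enough is surfaced. Tag overlap (Jaccard similarity) stands in here for whatever matching a real system would use, such as embeddings; the function names, threshold, and sample events are assumptions for illustration.

```python
def jaccard(a, b):
    """Overlap between two tag sets, from 0.0 (disjoint) to 1.0 (identical)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def surface(work_context_tags, events, limit=3, threshold=0.25):
    """Return summaries of past events that resemble the current work context."""
    scored = [(jaccard(work_context_tags, e["tags"]), e) for e in events]
    relevant = [(score, e) for score, e in scored if score >= threshold]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [e["summary"] for _, e in relevant[:limit]]


# Hypothetical past events.
past_events = [
    {"summary": "Twelve-page summary of the previous vendor renegotiation",
     "tags": {"vendor_contract", "renegotiation", "procurement"}},
    {"summary": "Retrospective on the office relocation",
     "tags": {"facilities", "relocation"}},
    {"summary": "Outcome of a vendor contract that ended in scope disputes",
     "tags": {"vendor_contract", "dispute"}},
]

# The team opens a new piece of work; nobody runs a search.
surfaced = surface({"vendor_contract", "renegotiation"}, past_events)
```

The call to `surface` would be made by the workflow tooling when the work item is created, which is the point: retrieval is a side effect of starting the work, not a task someone has to remember.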

Pattern extraction emerges as the event log grows, and this is where the compounding effect becomes tangible. Failure modes that recur across departments. Decision contexts that consistently produce poor outcomes. Skills that are systematically underutilised despite being present in the organisation. No single person could hold these patterns across thousands of events, but the system can.

The technology exists today. Vector databases, embedding models, knowledge graphs, large language models that extract structured information from narrative content. The gap isn’t technology. The gap is organisational will to treat knowledge infrastructure with the same seriousness as the servers and pipelines and monitoring dashboards that engineering teams would never dream of going without.

In an agentic enterprise, institutional memory is what separates a useful AI agent from a fast one.

An AI agent that processes a customer complaint in isolation, with no access to what the organisation learned from similar complaints, no visibility into the reasoning behind its policies, is performing a sophisticated text-matching exercise. It can resolve the complaint according to current rules. It can’t tell you that those rules are producing systematically poor outcomes in a specific customer segment, because it has no access to the pattern history.

An AI agent with access to institutional memory is a different participant entirely.

I watched a demo last month where an agent, negotiating a vendor contract, surfaced a pattern from the organisation’s own history: “The last three contracts with this vendor type that included a fixed-price clause resulted in scope disputes averaging 4.2 months to resolve. Contracts with time-and-materials plus cap structure had zero disputes.” The team hadn’t searched for this. The system surfaced it because the work context triggered a relevant pattern. The procurement lead paused, re-read the recommendation, and changed her approach on the spot. “That would have taken us weeks to find manually,” she said afterwards, “if we’d thought to look at all.”

Continuous learning works through the same mechanism. Every decision an AI agent makes, and its outcome, feeds back into the event log in the same structured format as human decisions. When an agent resolves a customer complaint, the resolution, the customer’s response, and the follow-up are all recorded. Over months, the pattern library compounds. The agent’s next decision is informed by every previous decision across every agent and human in the system.

What changes the equation for tacit knowledge is that machine learning can identify patterns in decision history that the decision-makers themselves can’t articulate. “Every time this customer type, with this complaint category, in this product line, was handled with this approach, the outcome was positive 87% of the time.” No human holds that pattern across thousands of interactions. A system can. Once identified, it becomes explicit knowledge, applied consistently across the organisation.
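At its simplest, a pattern like the one quoted above is a grouped success rate. The sketch below computes it with plain counting rather than machine learning; the field names, the `min_samples` cut-off, and the sample interactions are assumptions invented for the example.

```python
from collections import defaultdict


def success_rates(interactions, min_samples=3):
    """Share of positive outcomes per (customer, category, product, approach)."""
    counts = defaultdict(lambda: [0, 0])   # key -> [positive, total]
    for item in interactions:
        key = (item["customer_type"], item["complaint_category"],
               item["product_line"], item["approach"])
        counts[key][1] += 1
        if item["outcome"] == "positive":
            counts[key][0] += 1
    # Ignore combinations seen too rarely to say anything about.
    return {
        key: positive / total
        for key, (positive, total) in counts.items()
        if total >= min_samples
    }


def record(approach, outcome):
    """Build one hypothetical interaction record."""
    return {"customer_type": "smb", "complaint_category": "billing",
            "product_line": "payments", "approach": approach,
            "outcome": outcome}


interactions = [
    record("refund_first", "positive"),
    record("refund_first", "positive"),
    record("refund_first", "negative"),
    record("refund_first", "positive"),
    record("escalate", "negative"),      # only one sample, filtered out
]

rates = success_rates(interactions)
```

The `min_samples` guard matters more than it looks. Without it, a single lucky interaction would be reported as a pattern with a 100% success rate.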

When one cell discovers a better approach, the event log propagates it. Other cells operating on similar work types see the pattern in their contextual retrieval. Knowledge spreads through the system without anyone mandating a wiki update. It just flows, the way water finds its level.

The organisation that gets this right learns faster than its competitors. Not because it has better people or superior technology, but because it has better memory infrastructure. And memory, compounded over time, widens the gap every quarter.

The woman from procurement knew this instinctively. She’d done the work. She’d written the summary. The organisation forgot anyway. Start by redefining “done”: a project isn’t complete when the deliverable ships. It’s complete when the learnings are captured as structured events. Then make pattern extraction visible. Then surface knowledge in workflow. The filing cabinet is dead. Build the event log.

Next in the series: Deep Dive #4 — The Quarterly Autopsy: Why your reports arrive after the patient is dead, and how event-driven organisations respond while it still matters